Lightweight personal AI assistant (~23k LOC). Chat via Telegram or Discord, run coding agent teams, schedule jobs, sandbox commands in containers, and approve risky actions, all from one small self-hosted service.

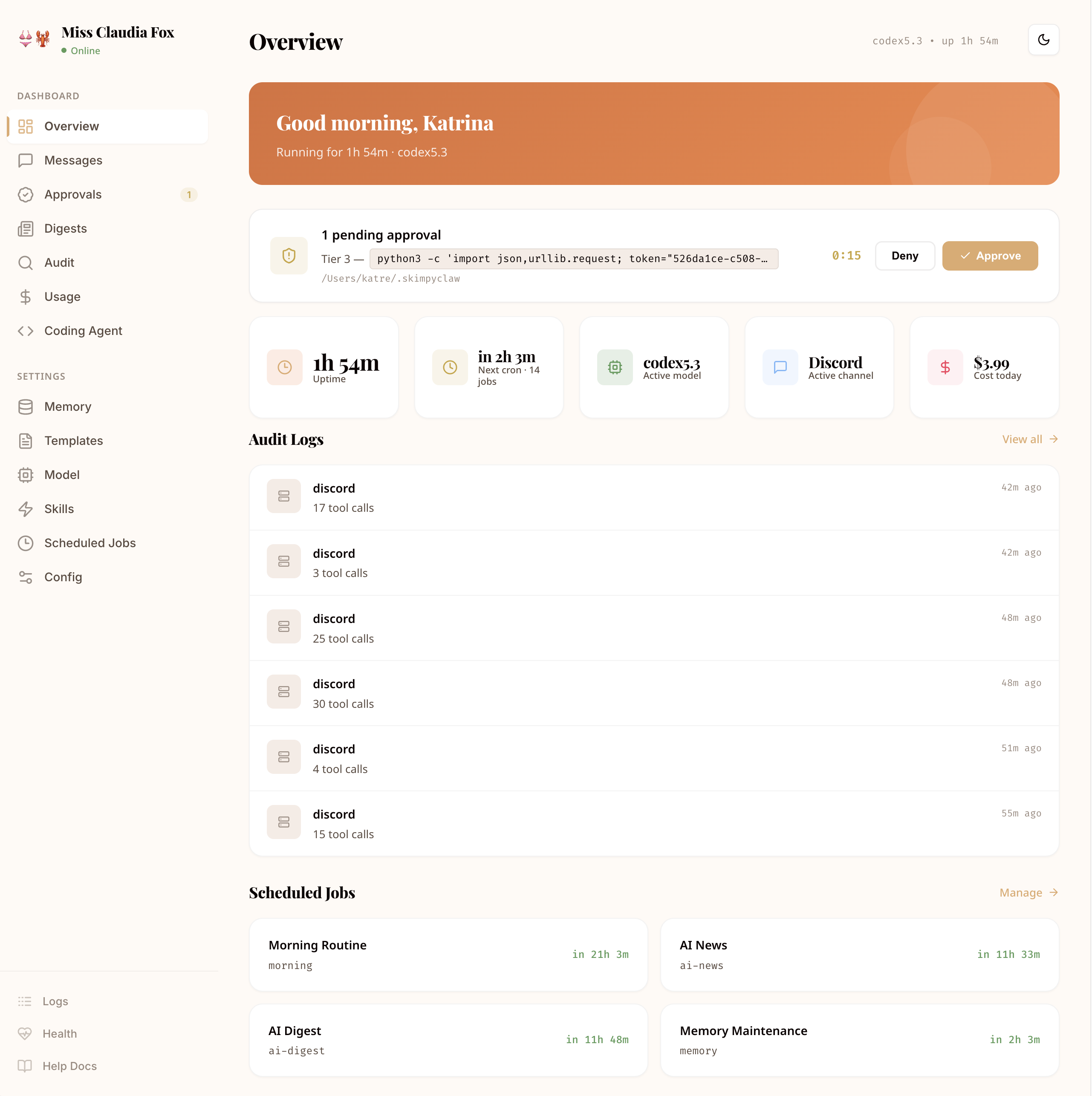

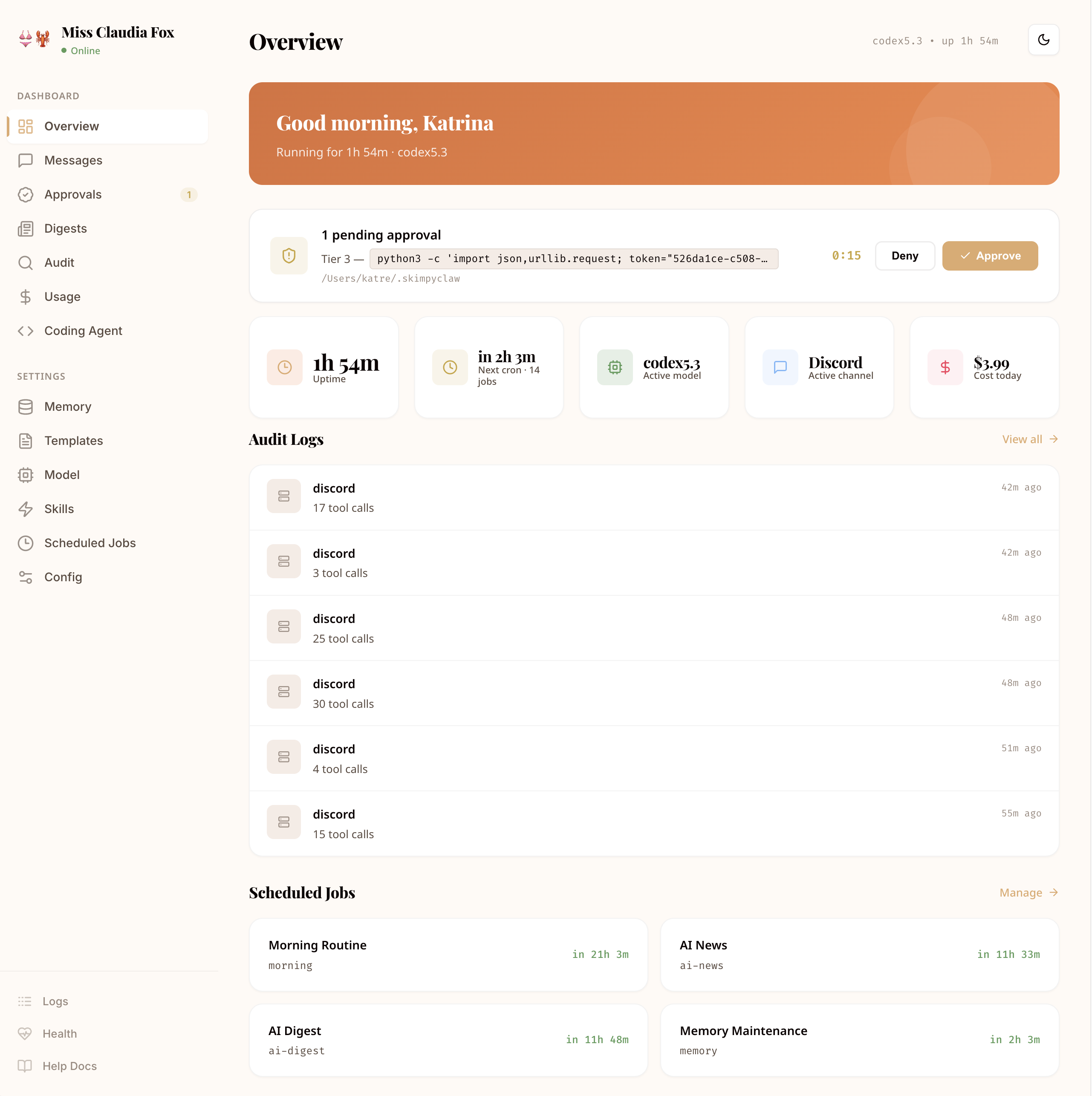

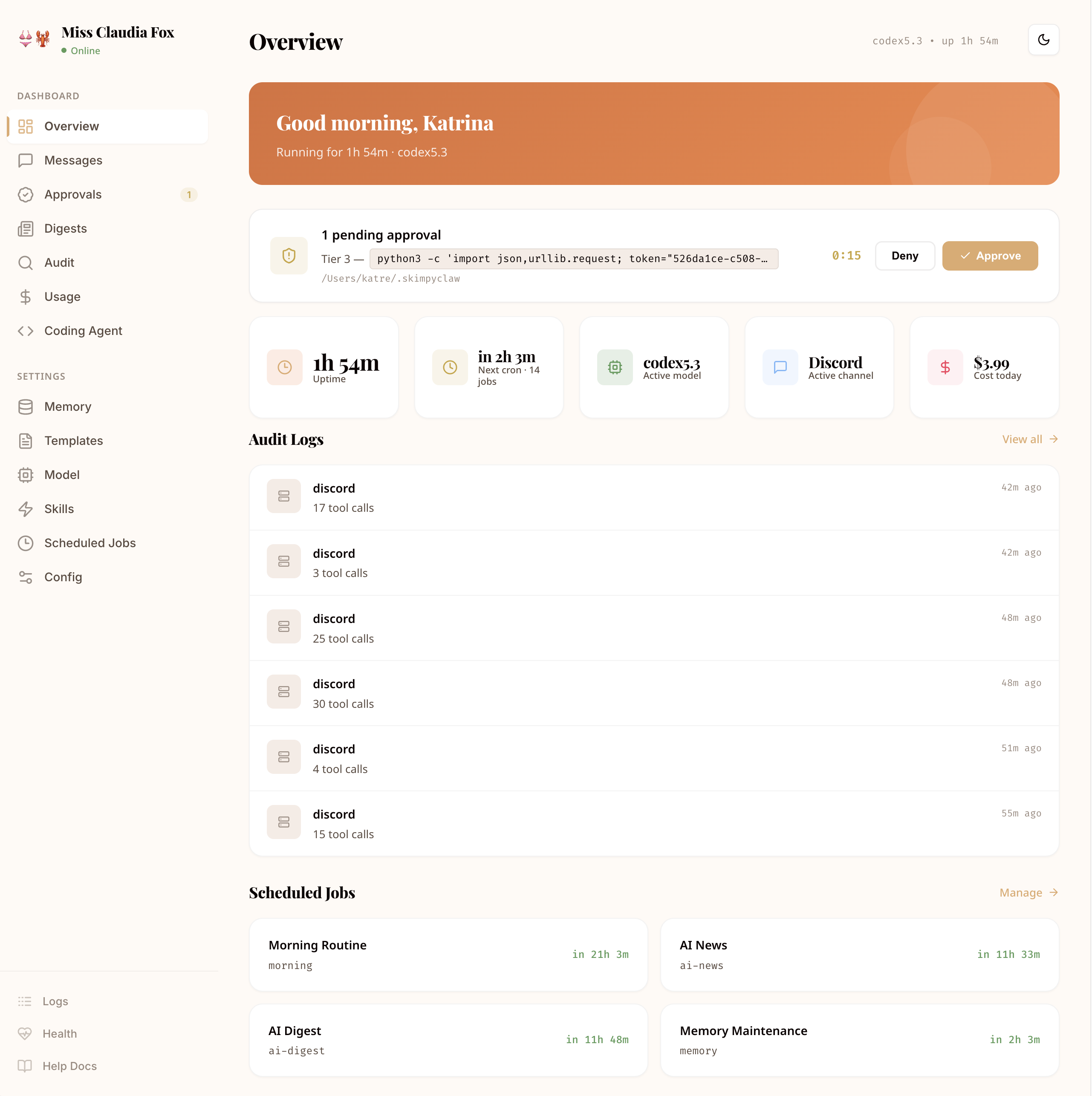

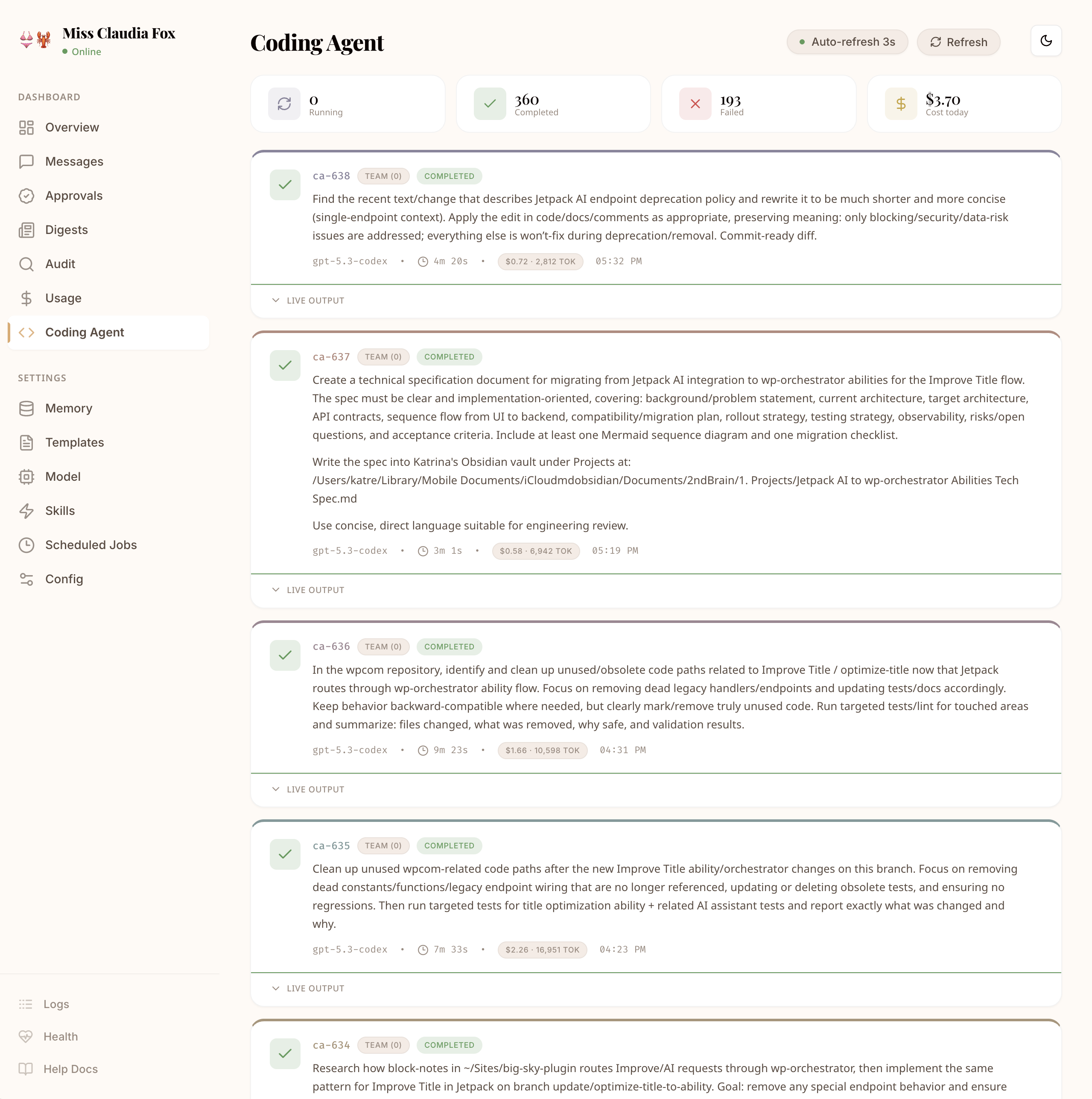

Every tool call, LLM request, approval decision, and cron execution is logged with timestamps, token counts, and cost data. Nothing happens in the dark.

Decompose tasks across multiple AI coding agents (Claude, Codex, Kimi) that work in parallel. Each agent writes code, runs tests, and reports back through structured validation gates.
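As a rough sketch of the fan-out and gate pattern described above: the example below is in TypeScript (an assumption), and the names `Agent`, `Gate`, `Assignment`, and `runTeam` are hypothetical, not taken from skimpyclaw's codebase.

```typescript
// Hypothetical types for illustration; skimpyclaw's real interfaces may differ.
interface AgentResult {
  agent: string; // e.g. "claude", "codex", "kimi"
  subtask: string;
  testsPassed: boolean;
}

// An agent takes a subtask and eventually reports a result.
type Agent = (subtask: string) => Promise<AgentResult>;

// A validation gate is a check a result must pass before it is accepted.
type Gate = (result: AgentResult) => boolean;

interface Assignment {
  agent: Agent;
  subtask: string;
}

async function runTeam(
  assignments: Assignment[],
  gates: Gate[],
): Promise<{ accepted: AgentResult[]; rejected: AgentResult[] }> {
  // Start every agent at once and wait for all of them to report back.
  const results = await Promise.all(
    assignments.map(({ agent, subtask }) => agent(subtask)),
  );

  const accepted: AgentResult[] = [];
  const rejected: AgentResult[] = [];
  for (const result of results) {
    // A result is accepted only if it passes every gate.
    const passed = gates.every((gate) => gate(result));
    (passed ? accepted : rejected).push(result);
  }
  return { accepted, rejected };
}
```

In this sketch `Promise.all` rejects as soon as any agent throws, so a real orchestrator would probably want `Promise.allSettled` or per-agent error handling instead.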

Run agent commands in isolated containers instead of on your host. Bash commands, file reads and writes, and directory listings all execute inside the sandbox, so the agent can install packages and run scripts without touching your host machine.

```sh
skimpyclaw sandbox init
```

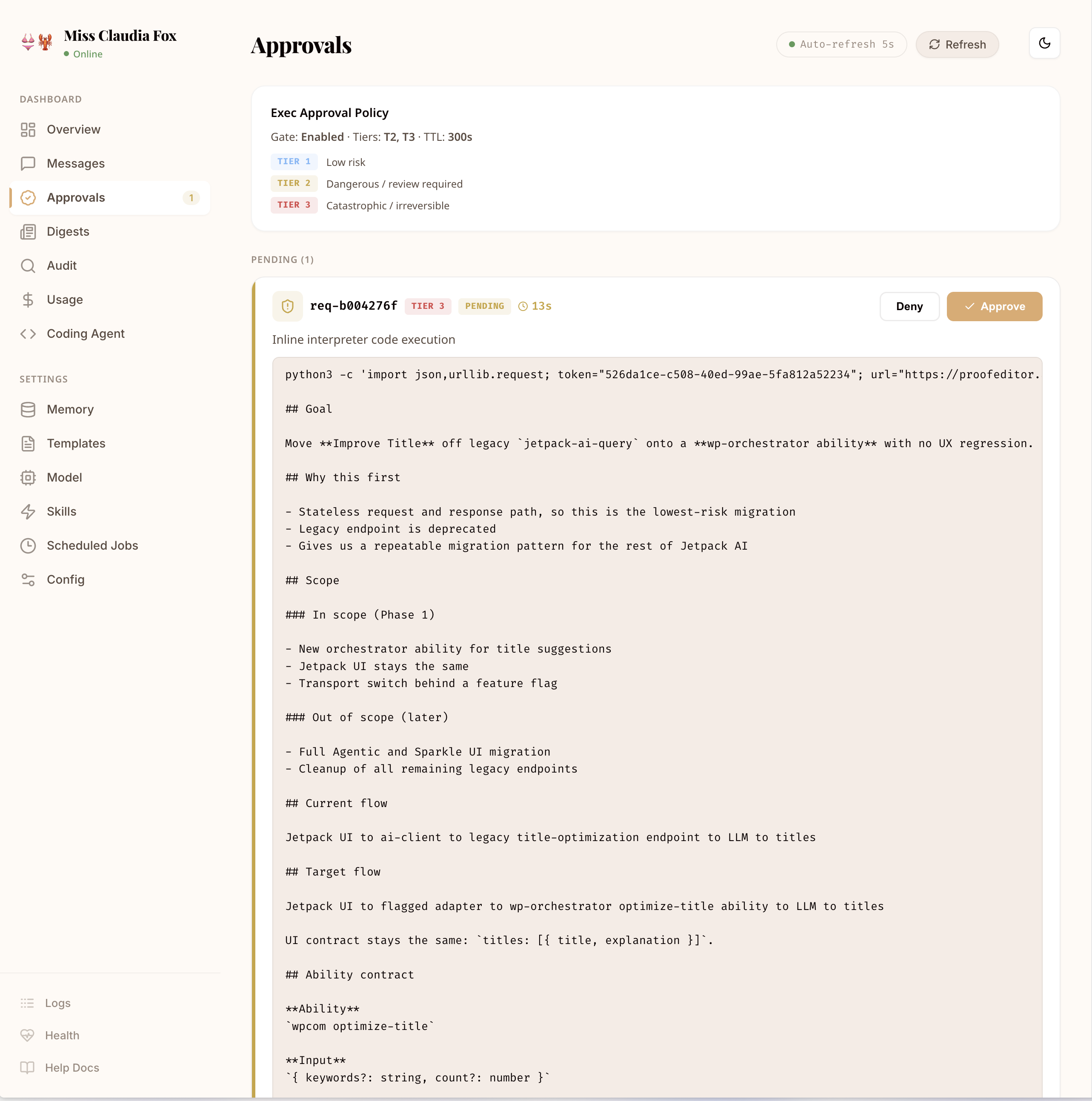

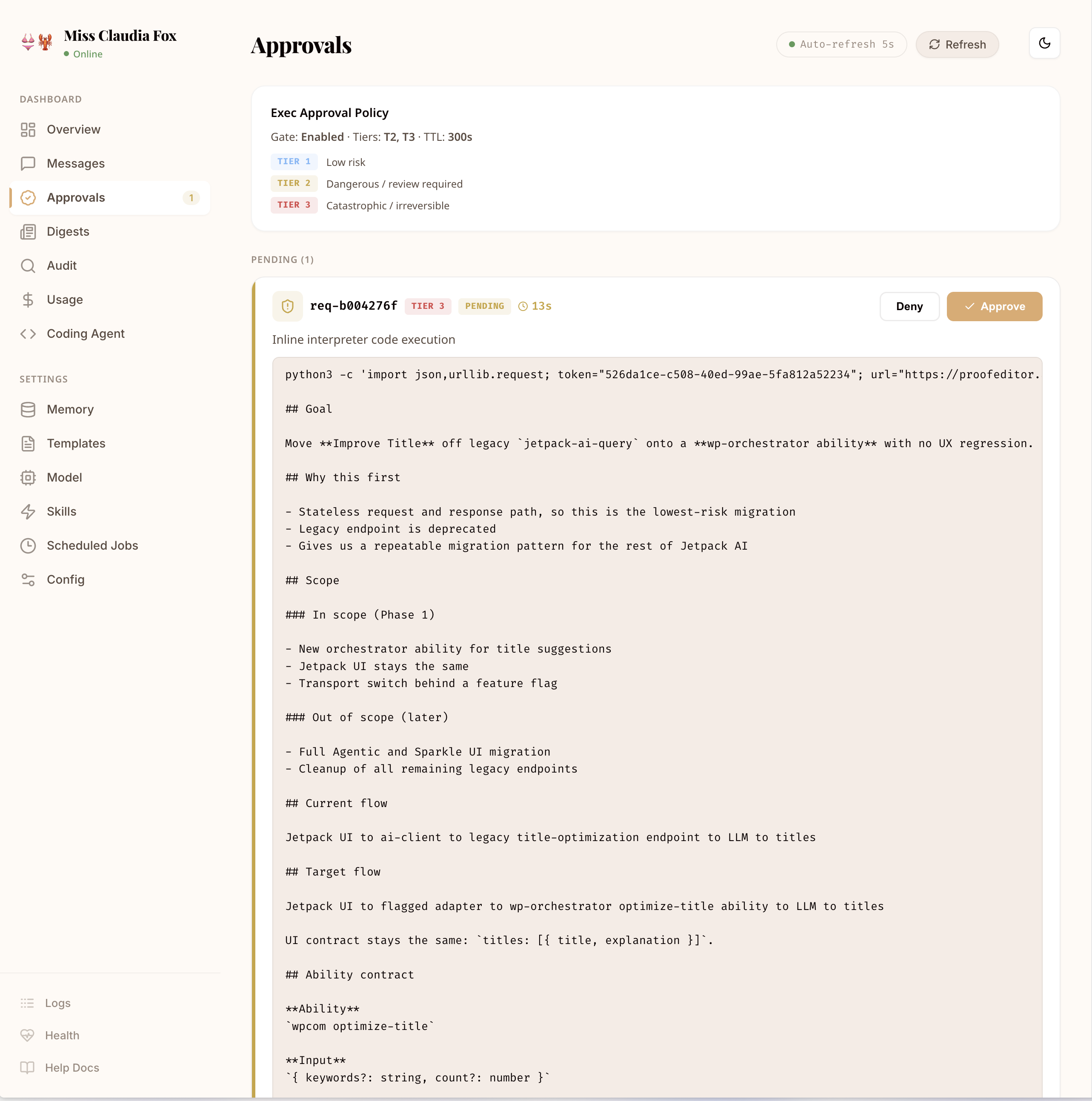

Every bash command is classified into a risk tier. Low-risk commands run automatically. Dangerous ones require explicit human approval via Telegram or Discord before execution, even inside the sandbox.
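To make the idea concrete, here is a minimal TypeScript sketch of tiered classification. The tier names, the program lists, and the functions `classifyCommand` and `requiresApproval` are all assumptions for illustration; skimpyclaw's actual rules are not shown in this document.

```typescript
// Hypothetical tiers and program lists; not skimpyclaw's real rule set.
type RiskTier = "low" | "medium" | "high";

const HIGH_RISK = new Set(["rm", "sudo", "dd", "mkfs", "shutdown", "reboot"]);
const MEDIUM_RISK = new Set(["mv", "chmod", "chown", "curl", "wget", "git"]);

function classifyCommand(command: string): RiskTier {
  // Split on common shell separators so every chained command is checked.
  const segments = command.split(/&&|\|\||[;|]/);
  let tier: RiskTier = "low";
  for (const segment of segments) {
    // The first word of each segment is treated as the program name.
    const program = segment.trim().split(/\s+/)[0] ?? "";
    if (HIGH_RISK.has(program)) return "high"; // highest tier wins immediately
    if (MEDIUM_RISK.has(program)) tier = "medium";
  }
  return tier;
}

// In this sketch only the top tier is held for human approval.
function requiresApproval(tier: RiskTier): boolean {
  return tier === "high";
}
```

With these example lists, `classifyCommand("cd /tmp && rm -rf build")` yields `"high"` because the chained `rm` is inspected too, while `classifyCommand("ls -la")` yields `"low"`. A production classifier would need real shell parsing, since naive splitting misses quoting and subshells.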

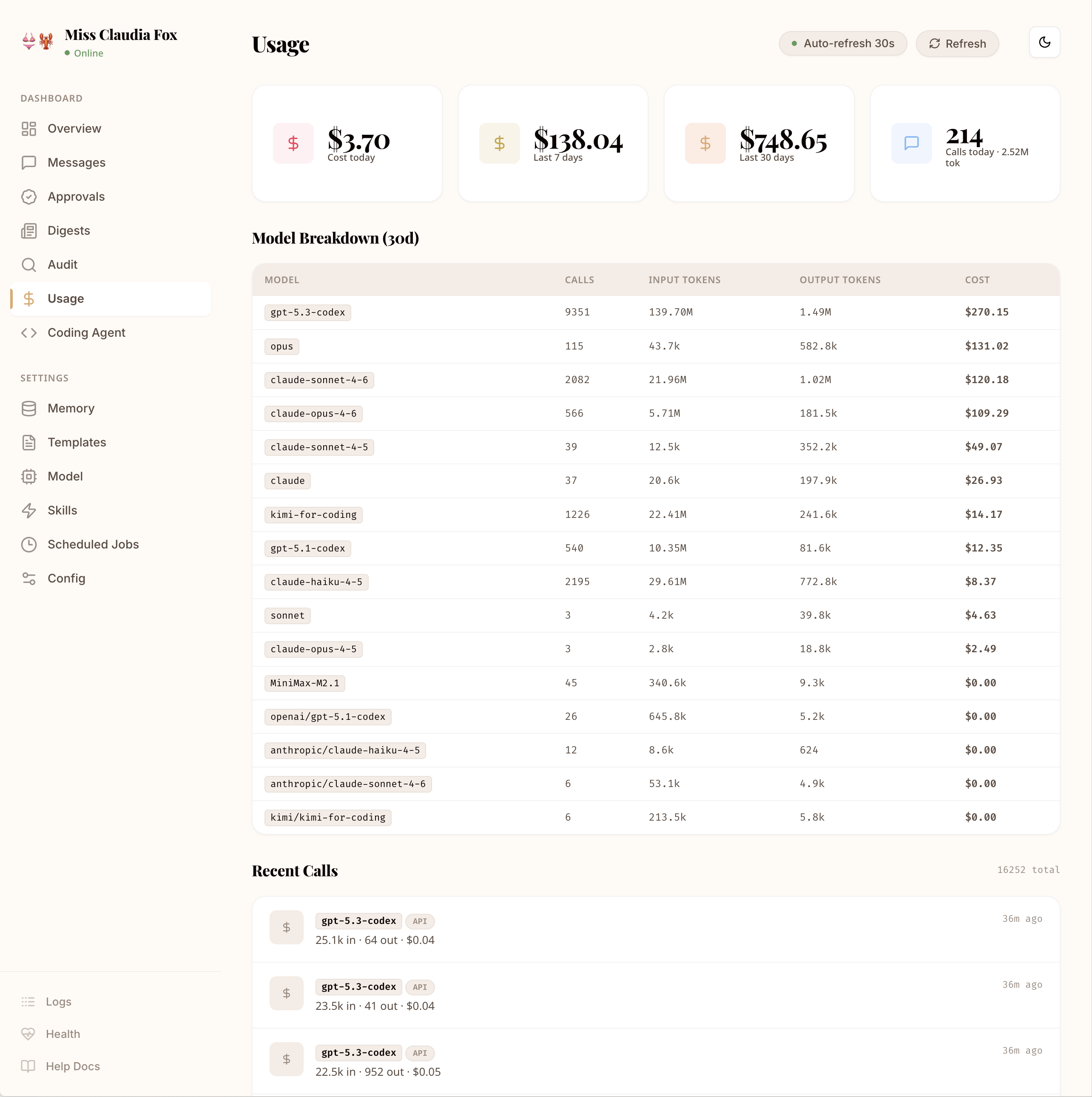

Track every token, every API call, every dollar. The dashboard shows usage summaries and per-model cost breakdowns, so you always know what your AI assistant is spending.
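A per-model cost breakdown can be computed roughly as follows. This is a sketch under stated assumptions: the `UsageRecord` and `Price` shapes, the `costByModel` function, and the prices are placeholders, not skimpyclaw's schema or real provider rates.

```typescript
// Hypothetical record and price shapes for illustration.
interface UsageRecord {
  model: string;
  inputTokens: number;
  outputTokens: number;
}

interface Price {
  inputPerMillion: number; // USD per 1M input tokens
  outputPerMillion: number; // USD per 1M output tokens
}

// Placeholder prices; these are not real provider rates.
const EXAMPLE_PRICES: Record<string, Price> = {
  "model-a": { inputPerMillion: 2, outputPerMillion: 8 },
  "model-b": { inputPerMillion: 1, outputPerMillion: 4 },
};

function costByModel(
  records: UsageRecord[],
  prices: Record<string, Price>,
): Map<string, number> {
  const totals = new Map<string, number>();
  for (const record of records) {
    const price = prices[record.model];
    if (price === undefined) continue; // unknown models are skipped in this sketch
    const cost =
      (record.inputTokens * price.inputPerMillion +
        record.outputTokens * price.outputPerMillion) /
      1_000_000;
    // Accumulate the running total for this model.
    totals.set(record.model, (totals.get(record.model) ?? 0) + cost);
  }
  return totals;
}
```

Silently skipping unknown models keeps the sketch short; a real tracker would more likely surface them so that spending is never undercounted.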

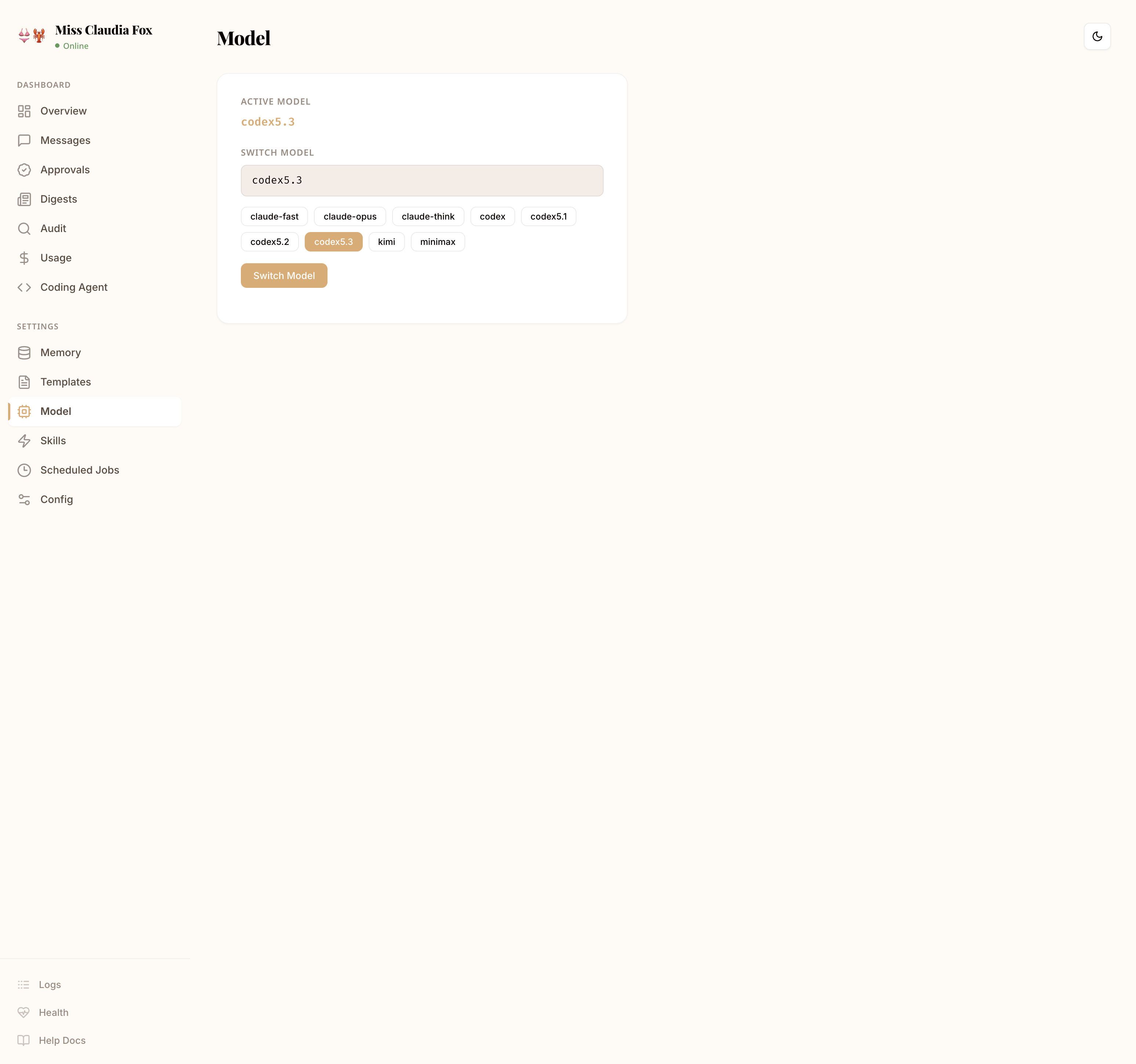

Not locked into one provider. Use Anthropic, OpenAI (via Codex), Kimi, MiniMax, or any OpenAI-compatible API like OpenRouter. Set model defaults per agent or override per cron job.
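The precedence between agent defaults and cron overrides could look like the sketch below. The config shapes (`AgentConfig`, `CronJobConfig`) and the `resolveModel` helper are hypothetical and only illustrate the "override wins, otherwise fall back to the agent default" rule described above.

```typescript
// Hypothetical config shapes; field names are illustrative, not skimpyclaw's schema.
interface AgentConfig {
  defaultModel: string;
}

interface CronJobConfig {
  agent: string; // name of the agent that runs the job
  model?: string; // optional per-job override
}

function resolveModel(
  job: CronJobConfig,
  agents: Record<string, AgentConfig>,
): string {
  // A per-job override wins over the agent's default.
  if (job.model !== undefined) return job.model;
  const agent = agents[job.agent];
  if (agent === undefined) {
    throw new Error(`Unknown agent: ${job.agent}`);
  }
  return agent.defaultModel;
}
```

Throwing on an unknown agent is a design choice of this sketch: a misconfigured job fails loudly instead of running on an unexpected model.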

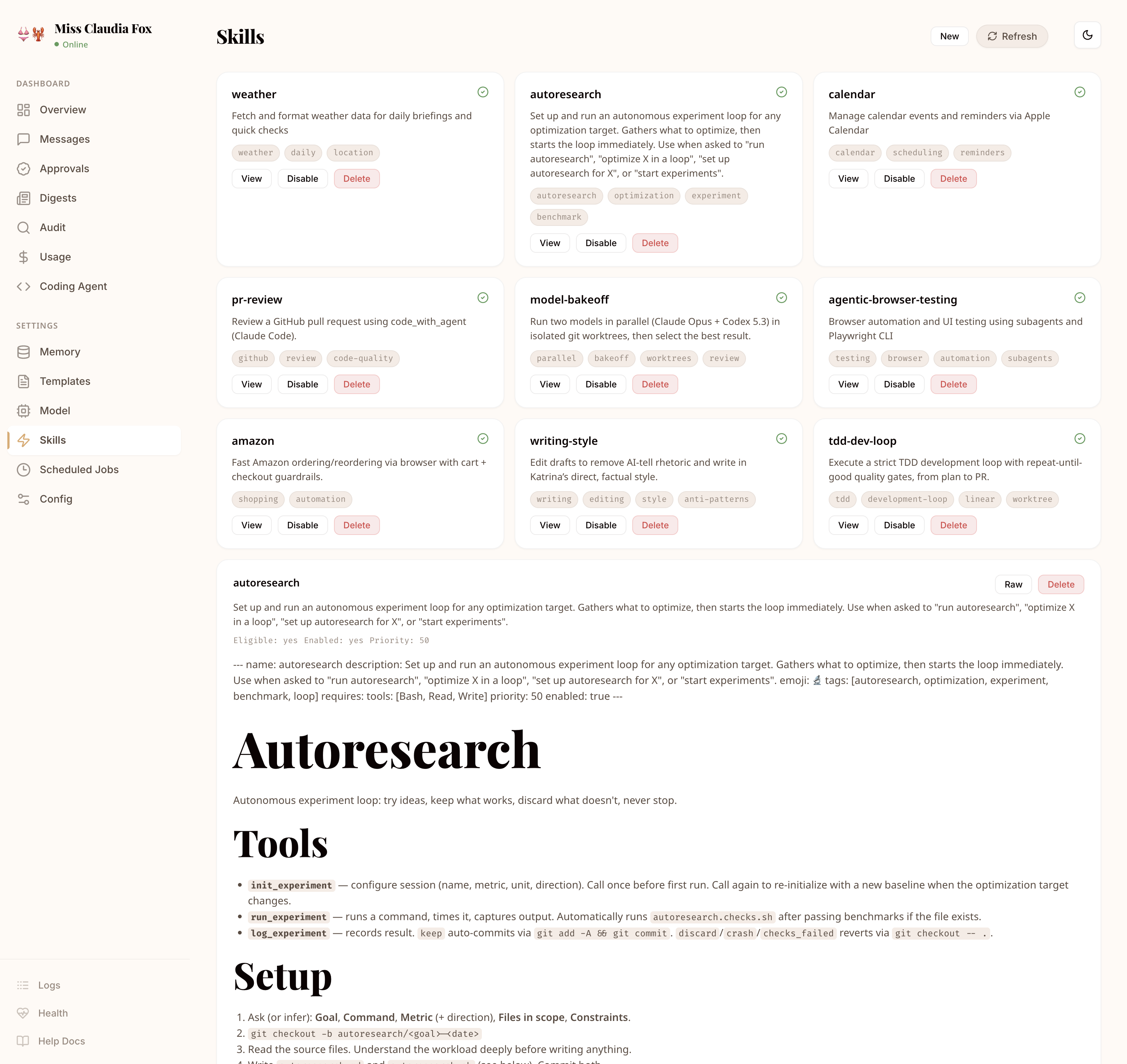

Write skills as simple markdown files with trigger patterns. Skills can invoke bash, file I/O, browser automation, MCP tools, or any combination, and are activated automatically when a trigger pattern matches.
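Trigger matching can be pictured with the sketch below. It assumes that triggers are regular expressions and that a skill file has already been parsed into a `Skill` object; the `Skill` shape and `matchSkills` function are hypothetical, and the on-disk markdown format is not shown in this document.

```typescript
// Hypothetical in-memory form of a parsed skill file.
interface Skill {
  name: string;
  triggers: string[]; // regular expression sources (assumed format)
  instructions: string; // the markdown body handed to the agent
}

// Return every skill with at least one trigger matching the message.
function matchSkills(message: string, skills: Skill[]): Skill[] {
  return skills.filter((skill) =>
    skill.triggers.some((trigger) => new RegExp(trigger, "i").test(message)),
  );
}

// Example skills, invented for illustration.
const EXAMPLE_SKILLS: Skill[] = [
  {
    name: "deploy",
    triggers: ["\\bdeploy\\b"],
    instructions: "Run the deploy script and report the result.",
  },
  {
    name: "backup",
    triggers: ["\\bback ?up\\b"],
    instructions: "Archive the data directory.",
  },
];
```

Here `matchSkills("Please deploy the staging site", EXAMPLE_SKILLS)` selects only the `deploy` skill, and a message that matches no trigger selects nothing.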

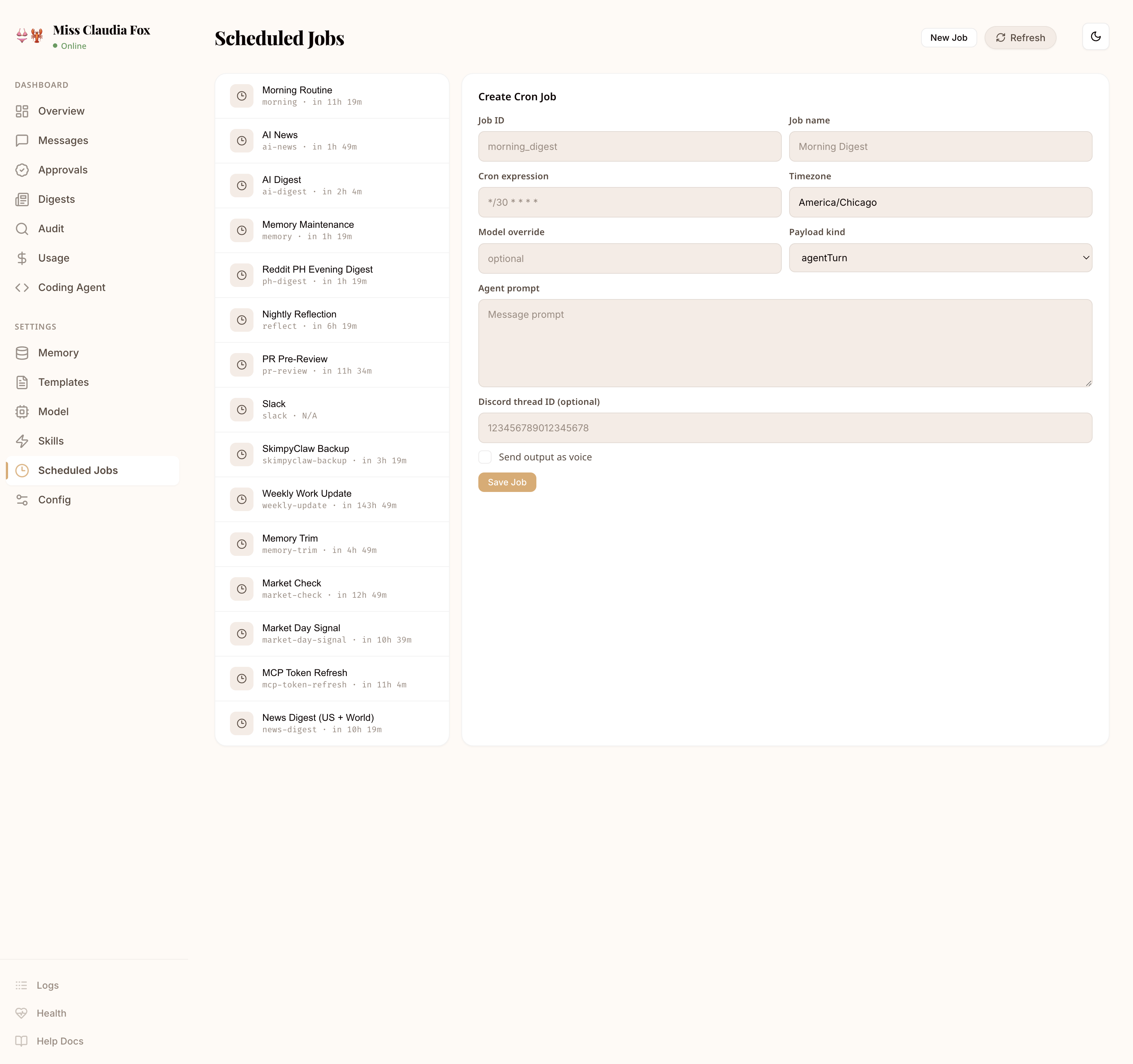

Run agent prompts or shell scripts on any schedule. Morning digests, nightly backups, and weekly reports are configured as cron jobs and can also be triggered on demand from the dashboard.

Install globally, onboard, and start the daemon.

```sh
pnpm add -g skimpyclaw
skimpyclaw onboard
skimpyclaw start --daemon
```